- What is Artificial Intelligence?

- What is the Turing Test in Artificial Intelligence?

- How does Artificial Intelligence (AI) Work?

- What are the Types of Artificial Intelligence?

- Where is Artificial Intelligence (AI) Used?

- Prerequisites for Artificial Intelligence

- History of Artificial Intelligence (AI)

- AI in Everyday life

- Applications of Artificial Intelligence in Business

- What Makes AI Technology So Useful?

- Career Trends in Artificial Intelligence

- What is the relationship between AI, ML, and DL?

- Examples of Artificial Intelligence

- Future of Artificial Intelligence

- Important FAQs on Artificial Intelligence (AI)

- Further Reading

What is Artificial Intelligence?

Artificial Intelligence is defined as the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings. AI is also defined as:

- An intelligent entity created by humans.

- Capable of performing tasks intelligently without being explicitly instructed.

- Capable of thinking and acting rationally and humanly.

A layman with a fleeting understanding of technology would link it to robots. They’d say Artificial Intelligence is a Terminator-like figure that can act and think on its own.

If you ask an AI researcher about artificial intelligence, they would say that it’s a set of algorithms that can produce results without being explicitly instructed to do so. The intelligence demonstrated by machines is known as Artificial Intelligence, and it has grown very popular in today’s world. It is the simulation of natural intelligence in machines that are programmed to learn and mimic the actions of humans. These machines are able to learn from experience and perform human-like tasks. As technologies such as AI continue to grow, they will have a great impact on our quality of life. It’s only natural that everyone today wants to connect with AI technology somehow, be it as an end-user or by pursuing a career in Artificial Intelligence.

How do we measure if Artificial Intelligence is acting like a human?

Even if we reach that state where an AI can behave as a human does, how can we be sure it can continue to behave that way? We can base the human-likeness of an AI entity on the:

- The Turing Test

- The Cognitive Modelling Approach

- The Laws of Thought Approach

- The Rational Agent Approach

Let’s take a detailed look at how these approaches perform:

What is the Turing Test in Artificial Intelligence?

The basis of the Turing Test is that the Artificial Intelligence entity should be able to hold a conversation with a human agent. The human agent ideally should not be able to conclude that they are talking to an Artificial Intelligence. To achieve these ends, the AI needs to possess these qualities:

- Natural Language Processing to communicate successfully.

- Knowledge Representation to act as its memory.

- Automated Reasoning to use the stored information to answer questions and draw new conclusions.

- Machine Learning to detect patterns and adapt to new circumstances.

Cognitive Modelling Approach

As the name suggests, this approach tries to build an Artificial Intelligence model based on Human Cognition. To distil the essence of the human mind, there are 3 approaches:

- Introspection: observing our thoughts, and building a model based on that

- Psychological Experiments: conducting experiments on humans and observing their behaviour

- Brain Imaging: using MRI to observe how the brain functions in different scenarios and replicating that through code.

The Laws of Thought Approach

The Laws of Thought are a large list of logical statements that govern the operation of our mind. The same laws can be codified and applied to artificial intelligence algorithms. The issue with this approach is that solving a problem in principle (strictly according to the laws of thought) and solving it in practice can be quite different, because practice requires contextual nuances. Also, there are some actions that we take without being 100% certain of an outcome, which an algorithm might not be able to replicate if there are too many parameters.
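To make the idea of "codified laws" concrete, here is a minimal sketch of rule-based deduction (forward chaining) applied to a classic syllogism. The fact and rule format, and the function name `forward_chain`, are hypothetical choices for illustration, not a standard library; real logic-based systems use far richer representations.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived.

    facts: set of (property, subject) pairs, e.g. ("man", "Socrates")
    rules: list of (premise, conclusion) pairs meaning
           "anything that has `premise` also has `conclusion`"
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            # Iterate over a copy because we add to `known` inside the loop.
            for prop, subject in list(known):
                if prop == premise and (conclusion, subject) not in known:
                    known.add((conclusion, subject))
                    changed = True
    return known


# "Socrates is a man; every man is an animal; every animal is mortal."
facts = {("man", "Socrates")}
rules = [("man", "animal"), ("animal", "mortal")]

derived = forward_chain(facts, rules)
print(("mortal", "Socrates") in derived)  # True
```

The conclusion is reached purely by applying the rules, which is exactly where the approach struggles: it has no way to act when the rules do not cover a situation or when outcomes are uncertain.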

The Rational Agent Approach

A rational agent acts to achieve the best possible outcome in its present circumstances.

According to the Laws of Thought approach, an entity must behave according to logical statements. But in some instances there is no logically right thing to do: there are multiple possible actions, each leading to different outcomes and corresponding compromises. The rational agent approach tries to make the best possible choice in the current circumstances, which makes it a much more dynamic and adaptable agent.
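A rational agent is commonly formalised as one that picks the action with the highest expected utility. The sketch below assumes made-up probabilities and utilities for a hypothetical delivery robot; it only illustrates how an agent can weigh trade-offs when no option is logically "right".

```python
def expected_utility(outcomes):
    """outcomes: list of (probability, utility) pairs for one action."""
    return sum(p * u for p, u in outcomes)


def choose_action(actions):
    """Return the name of the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


# Hypothetical numbers: each action maps to its possible outcomes.
actions = {
    "highway":   [(0.8, 10), (0.2, -20)],  # usually fast, sometimes jammed
    "back_road": [(1.0, 5)],               # slower but predictable
}

print(choose_action(actions))  # back_road
```

Here the agent prefers the predictable route because its expected utility (5.0) beats the highway’s (0.8 × 10 + 0.2 × -20 = 4.0), even though the highway has the single best outcome.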

Now that we understand how Artificial Intelligence can be designed to act like a human, let’s take a look at how these systems are built.

How does Artificial Intelligence (AI) Work?

Building an AI system is a careful process of reverse-engineering human traits and capabilities in a machine, and using its computational prowess to surpass what we are capable of.

To understand how Artificial Intelligence actually works, one needs to dive deep into the various sub-domains of Artificial Intelligence and understand how those domains can be applied across different industries. You can also take up an artificial intelligence course that will help you gain a comprehensive understanding.

- Machine Learning: ML teaches a machine how to make inferences and decisions based on past experience. It identifies patterns and analyses past data to infer the meaning of these data points and reach a possible conclusion without having to involve human experience. This automation of reaching conclusions by evaluating data saves businesses time and helps them make better decisions. To learn the basic concepts, you can enrol in a free machine learning course for beginners.

- Deep Learning: Deep Learning is an ML technique. It teaches a machine to process inputs through layers in order to classify, infer and predict the outcome.

- Neural Networks: Neural Networks work on principles loosely inspired by human neurons. They are a series of algorithms that capture the relationships between various underlying variables and process the data in a way that resembles how a human brain does.

- Natural Language Processing: NLP is the science of reading, understanding, and interpreting a language by a machine. Once a machine understands what the user intends to communicate, it responds accordingly.

- Computer Vision: Computer vision algorithms try to understand an image by breaking down an image and studying different parts of the object. This helps the machine classify and learn from a set of images, to make a better output decision based on previous observations.

- Cognitive Computing: Cognitive computing algorithms try to mimic a human brain by analysing text, speech, images and objects the way a human does, and try to give the desired output. You can also take up free courses on the applications of artificial intelligence.
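As a minimal illustration of "learning from past experience" with a neural unit, the sketch below trains a single perceptron (the simplest artificial neuron) on labelled examples of logical AND. It is plain Python for readability; practical machine learning and deep learning rely on dedicated libraries and many layers of neurons, so treat this as a toy under those assumptions.

```python
def predict(weights, bias, x):
    """Fire (return 1) if the weighted sum of inputs plus bias is positive."""
    total = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Perceptron learning rule: after each wrong answer, nudge the
    weights and bias towards the correct target."""
    n_inputs = len(examples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        for x, target in examples:
            error = target - predict(weights, bias, x)  # -1, 0 or +1
            weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
            bias += learning_rate * error
    return weights, bias


# "Past experience": inputs paired with the correct output of logical AND.
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(and_examples)
print([predict(weights, bias, x) for x, _ in and_examples])  # [0, 0, 0, 1]
```

Nobody writes the AND rule into the code; the neuron finds weights that reproduce it from the examples. Deep learning stacks many such units into layers so that they can learn far more complex patterns.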

What are the Types of Artificial Intelligence?

Not all types of AI use all the above fields simultaneously. Different Artificial Intelligence entities are built for different purposes, and that’s how they vary. AI can be classified in two ways: Type 1 (based on capabilities) and Type 2 (based on functionalities). Here’s a brief introduction to the first type.

3 Types of Artificial Intelligence

- Artificial Narrow Intelligence (ANI)

- Artificial General Intelligence (AGI)

- Artificial Super Intelligence (ASI)

Let’s take a detailed look.

What is Artificial Narrow Intelligence (ANI)?

This is the most common form of AI that you’d find in the market now. These Artificial Intelligence systems are designed to solve one single problem and would be able to execute a single task really well. By definition, they have narrow capabilities, like recommending a product for an e-commerce user or predicting the weather. This is the only kind of Artificial Intelligence that exists today. They’re able to come close to human functioning in very specific contexts, and even surpass humans in many instances, but they only excel in very controlled environments with a limited set of parameters.

To build a strong AI foundation, you can also upskill with the help of the free online course offered by Great Learning Academy on Introduction to Artificial Intelligence. With the help of this course, you can learn all the basic concepts required for you to build a career in AI.

What is Artificial General Intelligence (AGI)?

AGI is still a theoretical concept. It’s defined as AI which has a human-level of cognitive function, across a wide variety of domains such as language processing, image processing, computational functioning and reasoning and so on.

We’re still a long way away from building an AGI system. By some estimates, an AGI system would need to consist of thousands of Artificial Narrow Intelligence systems working in tandem, communicating with each other to mimic human reasoning. Even on one of the most advanced supercomputers, Fujitsu’s K computer, it took 40 minutes to simulate a single second of activity in a network representing roughly 1% of the human brain’s neurons. This speaks to both the immense complexity and interconnectedness of the human brain, and to the magnitude of the challenge of building an AGI with our current resources.

What is Artificial Super Intelligence (ASI)?

We’re almost entering science-fiction territory here, but ASI is seen as the logical progression from AGI. An Artificial Super Intelligence (ASI) system would be able to surpass all human capabilities. This would include making rational decisions, and even things like making better art and building emotional relationships.

Once we achieve Artificial General Intelligence, AI systems would rapidly be able to improve their capabilities and advance into realms that we might not even have dreamed of. While the gap between AGI and ASI would be relatively narrow (some say as little as a nanosecond, because that’s how fast Artificial Intelligence would learn), the long journey ahead of us towards AGI itself makes this seem like a concept that lies far in the future.

Difference between Augmented Intelligence and AI

| Artificial Intelligence | Augmented Intelligence |
|---|---|
| AI replaces humans and operates with high accuracy. | Augmentation does not replace people but creates systems that help in manufacturing. |
| Replaces human decision making | Augments human decision making |
| Robots/Industrial IoT: Robots will replace all humans on the factory floor. | Robots/Industrial IoT: Collaborative robots work alongside humans to handle tasks that are hard and repetitive. |
| Real-time applications of AI in customer success: 1. Automated customer support and chatbots 2. Virtual assistants 3. Automated workflows | Real-time applications of IA in customer success: 1. IA-enabled customer analytics 2. Discovering high-risk/high-potential customers 3. Sales forecasting |

Strong and Weak Artificial Intelligence

Extensive research in Artificial Intelligence also divides it into two more categories, namely Strong Artificial Intelligence and Weak Artificial Intelligence. The terms were coined by the philosopher John Searle to distinguish between machines that merely simulate intelligence and machines that would genuinely possess a mind. Here are some of the core differences between them.

| Weak AI | Strong AI |
|---|---|
| It is a narrow application with a limited scope. | It is a wider application with a much broader scope. |
| This application is good at specific tasks. | This application would have human-level intelligence. |
| It uses supervised and unsupervised learning to process data. | It uses clustering and association to process data. |
| Example: Siri, Alexa. | Example: Advanced Robotics |

What is the Purpose of Artificial Intelligence?

The purpose of Artificial Intelligence is to aid human capabilities and help us make advanced decisions with far-reaching consequences. That’s the answer from a technical standpoint. From a philosophical perspective, Artificial Intelligence has the potential to help humans live more meaningful lives devoid of hard labour, and help manage the complex web of interconnected individuals, companies, states and nations to function in a manner that’s beneficial to all of humanity.

Currently, the purpose of Artificial Intelligence is shared by all the different tools and techniques that we’ve invented over the past thousand years – to simplify human effort, and to help us make better decisions. Artificial Intelligence has also been touted as our Final Invention, a creation that would invent ground-breaking tools and services that would exponentially change how we lead our lives, by hopefully removing strife, inequality and human suffering.

That’s all in the far future, though; we’re still a long way from those kinds of outcomes. Currently, Artificial Intelligence is being used mostly by companies to improve their process efficiencies, automate resource-heavy tasks, and make business predictions based on hard data rather than gut feelings. As with all technology that has come before it, the research and development costs need to be subsidised by corporations and government agencies before it becomes accessible to everyday laymen. To learn more about the purpose of artificial intelligence and where it is used, you can take up an AI course and upskill today.

Where is Artificial Intelligence (AI) Used?

AI is used in different domains to give insights into user behaviour and give recommendations based on data. For example, Google’s predictive search algorithm uses past user data to predict what a user will type next in the search bar. Netflix uses past user data to recommend what movie a user might want to watch next, keeping users hooked on the platform and increasing watch time. Facebook has used past user data to automatically suggest friends to tag, based on the facial features in their images. Large organisations use AI everywhere to make end users’ lives simpler. The uses of Artificial Intelligence broadly fall under the data processing category, which includes the following:

- Searching within data, and optimising the search to give the most relevant results

- Logic-chains for if-then reasoning, that can be applied to execute a string of commands based on parameters

- Pattern-detection to identify significant patterns in large data sets for unique insights

- Applied probabilistic models for predicting future outcomes
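The "applied probabilistic models" item can be illustrated with a toy version of next-word prediction, similar in spirit to the predictive search mentioned above. This is not Google’s algorithm: it is a hypothetical bigram model that simply counts which word followed which in a made-up list of past queries and turns those counts into probabilities.

```python
from collections import Counter, defaultdict


def build_bigram_model(queries):
    """Count how often each word is followed by each other word."""
    following = defaultdict(Counter)
    for query in queries:
        words = query.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def suggest_next(model, word, k=2):
    """Return up to k likely next words with their estimated probabilities."""
    counts = model.get(word.lower())  # .get() does not create empty entries
    if not counts:
        return []
    total = sum(counts.values())
    return [(w, c / total) for w, c in counts.most_common(k)]


# Hypothetical past search queries.
past_queries = [
    "weather today",
    "weather tomorrow",
    "weather today in mumbai",
    "news today",
]

model = build_bigram_model(past_queries)
print(suggest_next(model, "weather"))  # 'today' ranks above 'tomorrow'
```

Because "today" followed "weather" in two of the three matching queries, the model estimates its probability as 2/3 and suggests it first. Real systems use much larger models and more context, but the principle of predicting from past data is the same.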

What are the Advantages of Artificial Intelligence?

There’s no doubt that technology has made our lives better. From music recommendations, map directions and mobile banking to fraud prevention, AI and other technologies have taken over. But there’s a fine line between advancement and destruction; there are always two sides to a coin, and that is the case with AI as well. Let us take a look at some advantages of Artificial Intelligence:

Advantages of Artificial Intelligence (AI)

- Reduction in human error

- Available 24×7

- Helps in repetitive work

- Digital assistance

- Faster decisions

- Rational Decision Maker

- Medical applications

- Improves Security

- Efficient Communication


Prerequisites for Artificial Intelligence

As a beginner, here are some of the basic prerequisites that will help you get started with the subject.

- A strong grasp of mathematics, namely calculus, statistics and probability.

- A good amount of experience in programming languages such as Java or Python.

- A strong grasp of understanding and writing algorithms.

- A strong background in data analytics.

- A good amount of knowledge of discrete mathematics.

- The will to learn machine learning.

History of Artificial Intelligence (AI)

Artificial Intelligence technology is much older than you would imagine, and the term “AI” is not new for researchers. The term “AI” was coined by the computer scientist John McCarthy for the 1956 workshop at Dartmouth College.

Getting certified in AI will give you an edge over other aspirants in this industry. With advancements such as facial recognition, AI in healthcare, chatbots and more, now is the time to build a path to a successful career in Artificial Intelligence. Virtual assistants have already made their way into everyday life, helping us save time and energy. Self-driving cars by tech giants like Tesla have already shown us the first step into the future. AI can help reduce and predict the risks of climate change, allowing us to make a difference before it’s too late. And all of these advancements are only the beginning; there’s so much more to come. According to the World Economic Forum, 133 million new roles could emerge by 2022 as work is redistributed between humans, machines and algorithms.

Intelligent robots and artificial beings first appeared in ancient Greek myths. Aristotle’s development of the syllogism and its use of deductive reasoning was a watershed moment in humanity’s quest to understand its own intelligence. Despite its long and deep roots, artificial intelligence as we know it today has been around for less than a century.

Let us take a look at the important timeline of events related to artificial intelligence:

1943 – Warren McCulloch and Walter Pitts published the paper “A Logical Calculus of the Ideas Immanent in Nervous Activity”, which is widely regarded as the first work on artificial intelligence (AI). They proposed a model of artificial neurons.

1949 – Donald Hebb proposed a theory for modifying the connection strength between neurons in his book The Organization of Behavior: A Neuropsychological Theory.

1950 – Alan Turing, an English mathematician, published “Computing Machinery and Intelligence”, in which he proposed a test to determine whether a machine can exhibit human-like intelligent behavior. This test is famously known as the Turing Test.

1951 – Harvard graduates Marvin Minsky and Dean Edmonds built SNARC, the first neural network computer.

1956 – Allen Newell and Herbert A. Simon built the “first artificial intelligence program”, named “Logic Theorist”. This program proved 38 of the first 52 theorems in Whitehead and Russell’s Principia Mathematica, and found new and more elegant proofs for some of them.

In the same year, the term “Artificial Intelligence” was adopted by John McCarthy, an American computer scientist, at the Dartmouth Conference, where AI was established as an academic field for the first time.

The enthusiasm towards Artificial Intelligence grew rapidly after this year.

1959 – Arthur Samuel coined the term machine learning while he was working at IBM.

1963 – John McCarthy started an Artificial Intelligence Lab at Stanford.

1966 – Joseph Weizenbaum created the first ever chatbot named ELIZA.

1972 – WABOT-1, the first humanoid robot, was built in Japan.

1974 to 1980 – This period is famously known as the first AI winter. Many scientists could not continue their research as government funding dried up, and interest in AI gradually declined.

1980 – AI was back with a bang! Digital Equipment Corporation began using R1, the first successful commercial expert system, which helped bring the first AI winter to an end.

In the same year, the first national conference of the American Association for Artificial Intelligence was held at Stanford University.

1987 to 1993 – With emerging computer technology and cheaper alternatives, many investors and the government stopped funding AI research, leading to the second AI winter.

1997 – A computer beats a human! IBM’s Deep Blue defeated the then world chess champion, Garry Kasparov, becoming the first computer to beat a reigning world chess champion in a match.

2002 – AI entered homes with the launch of the Roomba robotic vacuum cleaner.

2005 – The American military started investing in autonomous robots such as Boston Dynamics’ “Big Dog” and iRobot’s “PackBot.”

2006 – Companies like Facebook, Google, Twitter and Netflix started using AI.

2008 – Google made a breakthrough in speech recognition and introduced a speech recognition feature in its iPhone app.

2011 – IBM’s Watson computer won Jeopardy!, a quiz show in which it had to answer complex questions and riddles. Watson demonstrated that it could understand natural language and solve complex problems quickly.

2012 – Andrew Ng, founder of the Google Brain deep learning project, fed 10 million images taken from YouTube videos into a neural network using deep learning algorithms. The neural network learnt to recognise a cat without being told what a cat is, which marked the beginning of a new era in deep learning and neural networks.

2014 – Google made the first self-driving car which passed the driving test.

2014 – Amazon’s Alexa was released.

2016 – Hanson Robotics unveiled Sophia, a humanoid robot capable of facial recognition, verbal conversation and facial expressions, which in 2017 became the first “robot citizen”.

2020 – During the early phases of the SARS-CoV-2 pandemic, Baidu made its LinearFold AI algorithm available to scientific and medical teams seeking to develop a vaccine. The system could predict the secondary structure of the virus’s RNA in just 27 seconds, 120 times faster than prior methods.

As each day progresses, Artificial Intelligence is making rapid advancements in all fields. AI is no longer the future, it is the present!

AI in Everyday life

Here is a list of AI applications that you may use in everyday life:

Online shopping: Artificial intelligence is used in online shopping to provide personalised recommendations to users, based on their previous searches and purchases.

Digital personal assistants: Smartphones use AI to provide personalised services. AI assistants can answer questions and help users organise their daily routines without hassle.

Machine translations: AI-based language translation software provides translations, subtitling and language detection which can help users to understand other languages.

Cybersecurity: AI systems can help recognise and fight cyberattacks based on recognising patterns and backtracking the attacks.

Artificial intelligence against Covid-19: In the case of Covid-19, AI has been used in identifying outbreaks, processing healthcare claims, and tracking the spread of the disease.
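The pattern-recognition idea behind the Cybersecurity example can be sketched as simple statistical anomaly detection: learn what "normal" looks like from past data, then flag values that deviate strongly from it. The failed-login counts, the function name `is_suspicious` and the three-standard-deviation threshold below are assumptions for illustration; production security tools combine many more signals and models.

```python
import statistics


def is_suspicious(history, new_value, threshold=3.0):
    """Return True if new_value lies more than `threshold` standard
    deviations above the mean of the historical (normal) values."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # requires at least two values
    if stdev == 0:
        # No variation in the history: anything above the usual value stands out.
        return new_value > mean
    z_score = (new_value - mean) / stdev
    return z_score > threshold


# Hypothetical failed-login counts per hour during normal operation.
normal_hours = [4, 6, 5, 3, 5, 7, 4, 6]

print(is_suspicious(normal_hours, 7))   # False: within the usual range
print(is_suspicious(normal_hours, 40))  # True: possibly a brute-force attempt
```

The baseline is computed from normal history only, so a single attack spike cannot inflate the standard deviation and hide itself.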

Applications of Artificial Intelligence in Business

AI truly has the potential to transform many industries, with a wide range of possible use cases. What all these different industries and use cases have in common is that they are all data-driven. Since Artificial Intelligence is an efficient data processing system at its core, there’s a lot of potential for optimisation everywhere.

Let’s take a look at the industries where AI is currently shining.

- Administration: AI systems are helping with routine, day-to-day administrative tasks to minimise human errors and maximise efficiency. For example, NLP is used to transcribe medical notes and help structure patient information so that doctors can read it more easily.

- Telemedicine: For non-emergency situations, patients can reach out to a hospital’s AI system to analyse their symptoms, input their vital signs and assess if there’s a need for medical attention. This reduces the workload of medical professionals by bringing only crucial cases to them.

- Assisted Diagnosis: Through computer vision and convolutional neural networks, AI is now capable of reading MRI scans to check for tumours and other malignant growths much faster than radiologists can and, in some studies, with a comparable or lower margin of error.

- Robot-assisted surgery: Robotic surgeries have a minuscule margin of error and can be performed consistently round-the-clock without fatigue. Since they operate with such a high degree of accuracy, they are less invasive than traditional methods, which potentially reduces the time patients spend recovering in hospital.

- Vital Stats Monitoring: A person’s state of health is an ongoing process, depending on the varying levels of their respective vital stats. With wearable devices achieving mass-market popularity, this data is now available on tap, just waiting to be analysed to deliver actionable insights. Since vital signs have the potential to predict health fluctuations even before the patient is aware of them, there are a lot of life-saving applications here.

- Better recommendations: This is usually the first example that people give when asked about business applications of AI, and that’s because it’s an area where AI has already delivered great results. Most large e-commerce players have incorporated Artificial Intelligence to make product recommendations that users might be interested in, which has led to considerable increases in their bottom lines.

- Chatbots: Another famous example, given the proliferation of Artificial Intelligence chatbots across industries and on almost every website we visit. These chatbots now serve customers during odd hours and peak hours alike, removing the bottleneck of limited human resources.

- Filtering spam and fake reviews: Due to the high volume of reviews that sites like Amazon receive, it would be impossible for human eyes to scan through them to filter out malicious content. Through the power of NLP, Artificial Intelligence can scan these reviews for suspicious activities and filter them out, making for a better buyer experience.

- Optimising search: All of e-commerce depends on users searching for what they want and being able to find it. Artificial Intelligence has been optimising search results based on thousands of parameters to help users find the exact product they are looking for.

- Supply-chain: AI is being used to predict demand for different products in different timeframes so that businesses can manage their stocks to meet that demand.

- Building work culture: AI is being used to analyse employee data and place them in the right teams, assign projects based on their competencies, collect feedback about the workplace, and even try to predict if they’re on the verge of quitting their company.

- Hiring: With NLP, AI can go through thousands of CVs in a matter of seconds and ascertain whether there’s a good fit. This can considerably reduce the length of hiring cycles and cut down on some human errors, although AI screening can still inherit biases present in the data it was trained on.
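The "Better recommendations" item above can be illustrated with a very small user-based collaborative filtering sketch: suggest items bought by customers whose purchase history overlaps with yours, weighting each suggestion by how much the histories overlap. The customer names, products and the function `recommend` are hypothetical; real e-commerce recommenders use far larger data and more sophisticated models.

```python
from collections import Counter


def recommend(purchases, user, k=3):
    """Score items the user doesn't own by how similar their buyers are."""
    mine = purchases[user]
    scores = Counter()
    for other, items in purchases.items():
        if other == user:
            continue
        overlap = len(mine & items)  # shared purchases = similarity
        if overlap == 0:
            continue
        for item in items - mine:    # only items the user doesn't have yet
            scores[item] += overlap
    return [item for item, _ in scores.most_common(k)]


# Hypothetical purchase histories.
purchases = {
    "asha":  {"phone", "case", "charger"},
    "ben":   {"phone", "case", "earbuds"},
    "chen":  {"phone", "earbuds", "smartwatch"},
    "divya": {"laptop", "mouse"},
}

print(recommend(purchases, "asha"))  # ['earbuds', 'smartwatch']
```

Earbuds score highest (3) because both similar customers bought them: ben shares two items with asha and chen shares one. Divya shares nothing with asha, so her purchases do not influence the result.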

Robots in AI

The field of robotics had been advancing even before AI became a reality. At this stage, artificial intelligence is helping robotics innovate faster and build more efficient robots. Robots in AI have found applications across verticals and industries, especially in manufacturing and packaging. Here are a few applications of robots in AI:

Assembly

- AI along with advanced vision systems can help in real-time course correction

- It also helps robots learn which path is best for a certain process while it’s in operation

Customer Service

- AI-enabled robots are being used in a customer service capacity in retail and hospitality industries

- These robots leverage Natural Language Processing to interact with customers intelligently and in a human-like way

- The more these systems interact with humans, the more they learn with the help of machine learning

Packaging

- AI enables quicker, cheaper, and more accurate packaging

- It records the motions a robot makes and constantly refines them, making robotic systems easier to install and move

Open Source Robotics

- Robotic systems today are being sold as open-source systems having AI capabilities.

- In this way, users can teach robots to perform custom tasks based on a specific application

- E.g.: small-scale agriculture

What Makes AI Technology So Useful?

Artificial intelligence offers several critical benefits that make it an excellent tool, such as:

- Automation – AI can automate tedious processes/tasks, without any fatigue.

- Enhancement – AI can enhance all the products and services effectively by improving experiences for end-users and delivering better product recommendations.

- Analysis and Accuracy – AI analysis is much faster and more accurate than humans. AI can use its ability to interpret data to make better decisions.

Simply put, AI helps organizations to make better decisions, enhancing product and business processes at a much faster pace.

Career Trends in Artificial Intelligence

Careers in Artificial Intelligence have shown steady growth over the past few years and will continue to grow at an accelerating rate. 57% of Indian companies are looking to hire the right talent to match market requirements. Aspirants who have successfully transitioned into an AI role have seen an average salary hike of 60-70%. Mumbai stands tall in the competition, followed by Bangalore and Chennai. According to the World Economic Forum (WEF), 133 million new roles could emerge by 2022 as work is redistributed between humans, machines and algorithms. Research states that the demand for AI talent has increased, but the workforce has not been able to keep pace with it.

AI is being used in various sectors such as healthcare, banking and finance, marketing and the entertainment industry. Deep Learning Engineer, Data Scientist, Director of Data Science and Senior Data Scientist are some of the top jobs that require AI Skills.

With the increase in opportunities available, it’s safe to say that now is the right time to upskill in this domain.

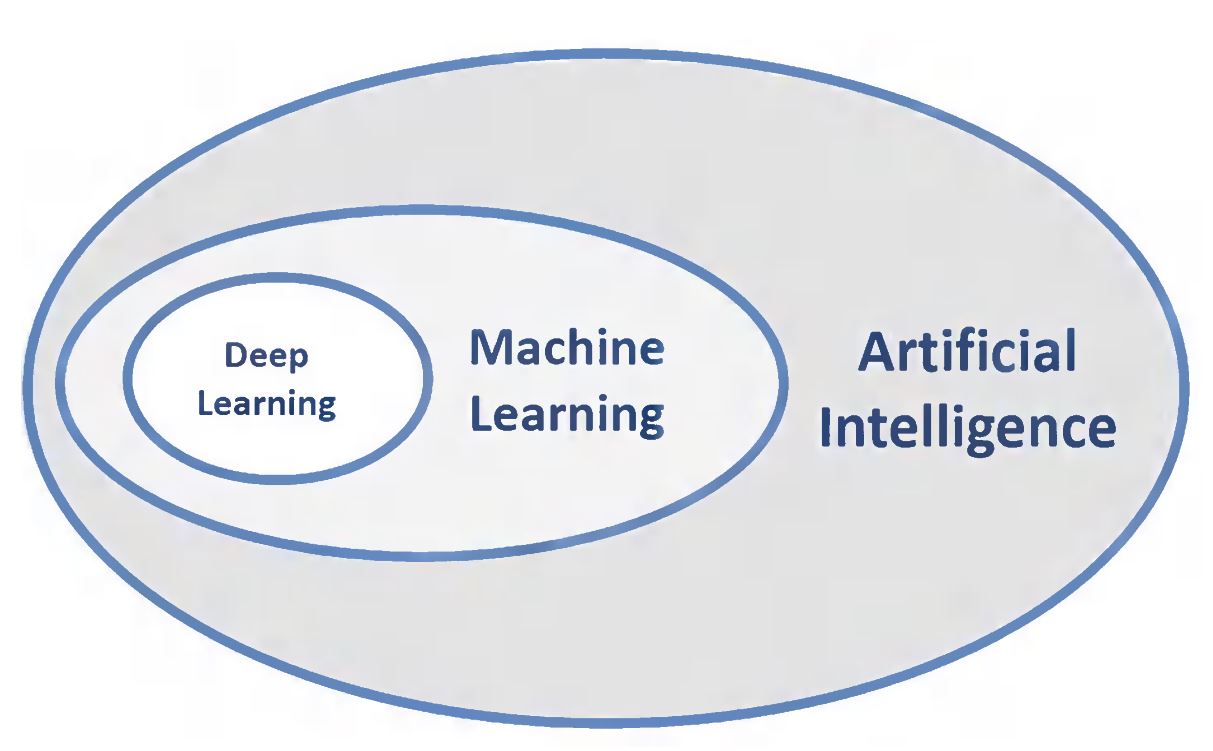

What is the relationship between AI, ML, and DL?

As the image above portrays, the three concentric ovals describe DL as a subset of ML, which is in turn a subset of AI. AI is therefore the all-encompassing concept that emerged first. It was followed by ML, which thrived later, and lastly by DL, which now promises to take the advances of AI to another level.

Examples of Artificial Intelligence

- Facebook Watch

- Facebook Friends Recommendations

- Siri, Alexa and other smart assistants

- Self-driving cars

- Robo-advisors

- Conversational bots

- Email spam filters

- Netflix’s recommendations

- Proactive healthcare management

- Disease mapping

- Automated financial investing

- Virtual travel booking agent

- Social media monitoring

Future of Artificial Intelligence

As humans, we have always been fascinated by technological change and fiction, and right now we are living amid the greatest advancements in our history. Artificial Intelligence has emerged as the next big thing in the field of technology. Organizations across the world are coming up with breakthrough innovations in artificial intelligence and machine learning. Artificial intelligence is not only impacting the future of every industry and every human being but has also acted as the main driver of emerging technologies like big data, robotics and IoT. Considering its growth rate, it will continue to act as a technological innovator for the foreseeable future. Hence, there are immense opportunities for trained and certified professionals to enter a rewarding career. As these technologies continue to grow, they will have more and more impact on the social setting and quality of life.

Career Opportunities in AI

- AI & ML Developer/Engineer

AI & ML Engineer/Developer is responsible for performing statistical analysis, running statistical tests, and implementing statistical designs. Furthermore, they develop deep learning systems, manage ML programs, implement ML algorithms, etc.

So, basically, they deploy AI & ML-based solutions for the company. To become an AI & ML developer, you will need good programming skills in Python, Scala and Java. You get to work on frameworks like Azure ML Studio, Apache Hadoop and Amazon ML. If you follow a structured AI engineer learning path, success is all yours! The average salary of an AI engineer in India ranges from INR 4 Lakhs p.a. to INR 20 Lakhs p.a.

- AI Analyst/Specialist

The role of an AI analyst or specialist is similar to that of an AI engineer. The key responsibility is to provide AI-oriented solutions and schemes that enhance the services delivered by a given industry, using data-analysis skills to study the trends and patterns in relevant datasets. Whether in healthcare, finance, geology, cyber security, or any other sector, AI analysts and specialists are making a noticeable impact. An AI analyst/specialist must have a good background in programming, system analysis, and computational statistics. A bachelor’s or equivalent degree can help you land an entry-level position, but a master’s or equivalent degree is usually required for core AI analyst positions. The average salary of an AI analyst ranges from INR 3 Lakhs to INR 10 Lakhs per year, depending on your years of experience and the company you work for.

- Data Scientist

Owing to the huge demand for data scientists, there is a good chance you are already familiar with the term. The role of a data scientist involves identifying valuable data streams and sources, working with data engineers to automate data collection, handling big data, and analyzing massive amounts of data to learn the trends and patterns needed to develop predictive ML models. A data scientist is also responsible for presenting solutions and strategies to decision-makers with the help of effective visualization tools and techniques. SQL, Python, Scala, SAS, SSAS, and R are the most useful tools for a data scientist, and they typically work with frameworks such as Amazon ML, Azure ML Studio, Spark MLlib, and so on. The average salary of a data scientist in India is INR 5-22 Lakhs per year, depending on their experience and the company that hires them.

- Research Scientist

Research scientist is another fascinating artificial intelligence job. This position involves researching the fields of Artificial Intelligence and Machine Learning to innovate and discover AI-oriented solutions to real-world problems. Research in any stream demands core expertise, and accordingly, the role of a research scientist calls for mastery of various AI disciplines such as Computational Statistics, Applied Mathematics, Deep Learning, Machine Learning, and Neural Networks. A research scientist is expected to have Python, Scala, SAS, SSAS, and R programming skills. Apache Hadoop, Apache SINGA, scikit-learn, and H2O are some common frameworks a research scientist works with. An advanced master’s or doctoral degree is a must for becoming an AI research scientist. According to current estimates, an AI research scientist in India earns a minimum of INR 35 Lakhs annually.

- Product Manager

Nowadays, in many leading companies, artificial intelligence plays a significant part in the product manager’s job. Resolving challenging issues by strategically collecting data is one of a product manager’s duties. You need to be able to identify relevant problems that impede the business and then gather related datasets for data interpretation. Once the data has been interpreted, the product manager implements effective AI strategies to evaluate the business impact of the inferences drawn from it. Because the role is so crucial, every organization needs an efficient product manager, the person who ensures that a product keeps running successfully. You must have good hands-on experience with programming languages such as Python, R, SQL, and other essential ones. The average starting pay of a product manager is around INR 7-8 Lakhs per annum, which can rise to INR 1 Crore in later years. There is no such thing as a free lunch: to get a job as a product manager, you must have in-depth knowledge of core concepts in AI/ML, Computer Science, Statistics, and Marketing. Ultimately, experience, skills, company, and location are the major factors that determine your salary as a product manager.

- Robotics Scientist

Global automation trends and the emergence of robotics in the field of AI clearly point to a growing demand for robotics scientists. In a fast-paced world led by technology, robots are taking over jobs that involve manual, repetitive, or tedious tasks. At the same time, they are creating employment for professionals with expertise in robotics, because building and managing these robotic systems requires robotics engineers. To pursue a career as a robotics engineer, you must have a master’s degree in Robotics, Computer Science, or Engineering. Robotics scientist is another interesting and high-paying AI career to take up. Since robots are complicated, working with them demands knowledge of several disciplines. If the field of robotics intrigues you and you are good at programming, mechanics, electronics, electrical systems, and sensing, with some grounding in psychology and cognition, this career option could be a good fit for you.

Important FAQs on Artificial Intelligence (AI)

Ques. Where is AI used?

Ans. Artificial Intelligence is used across industries globally. Some of the industries that have delved deep into AI to find new applications are E-commerce, Retail, Security and Surveillance, Sports Analytics, Manufacturing and Production, and Automotive, among others.

Ques. How is AI helping in our life?

Ans. Virtual digital assistants have changed the way we do our daily tasks. Alexa and Siri have become part of our routine, and we interact with them every day for needs big and small. Their natural language abilities and their ability to keep learning from data are why they are developing so fast and becoming more humanlike in their interactions, while being faster than us at many tasks.

Ques. Is Alexa an AI?

Ans. Yes, Alexa is an AI-powered virtual assistant that many of us interact with every day.

Ques. Is Siri an AI?

Ans. Yes, just like Alexa, Siri is an artificial intelligence that uses advanced machine learning technologies to function.

Ques. Why is AI needed?

Ans. AI can make many processes better, faster, and more accurate. It has some crucial applications, such as identifying and predicting fraudulent transactions, faster and more accurate credit scoring, and automating labor-intensive data management practices. Artificial Intelligence improves existing processes across industries and applications and also helps develop new solutions to problems that are overwhelming to deal with manually.

Ques. What is artificial intelligence with examples?

Ans. Artificial Intelligence is an intelligent entity created by humans. It is capable of performing tasks intelligently without being explicitly instructed to do so. We use AI in our daily lives without even realizing it: Spotify, Siri, Google Maps, and YouTube all rely on AI to function.

Ques. Is AI dangerous?

Ans. Although there is much speculation about AI being dangerous, at the moment we cannot say that AI is dangerous. It has benefited our lives in several ways.

Ques. What is the goal of AI?

Ans. The basic goal of AI is to enable computers and machines to perform intellectual tasks such as problem solving, decision making, perception, and understanding human communication.

Ques. What are the advantages of AI?

Ans. Artificial intelligence has several advantages, listed below:

- Available 24×7

- Digital Assistance

- Faster Decisions

- New Inventions

- Reduction in Human Error

- Helps with Repetitive Jobs

Ques. Who invented AI?

Ans. The term Artificial Intelligence was coined by John McCarthy. He is widely considered the father of AI.

Ques. Is artificial intelligence the future?

Ans. We are currently living through some of the greatest advancements in the history of Artificial Intelligence. It has emerged as the next big thing in technology and is shaping the future of almost every industry. As a result, demand for professionals in the field of AI is growing. According to the World Economic Forum’s Future of Jobs Report 2018, 133 million new roles may emerge by 2022 as work shifts between humans, machines, and algorithms. Yes, AI is the future.

Ques. What is AI and its application?

Ans. AI has made its way into various industries today, from gaming to healthcare. AI is everywhere. Did you know that the facial recognition feature on our phones uses AI? Google Maps also uses AI, and AI is part of our daily life more than we realize. Email spam filters, voice-to-text features, search recommendations, fraud protection and prevention, and ride-sharing applications are some examples of AI and its applications.

What’s your view about the future of Artificial Intelligence? Leave your comments below.

Curious to dig deeper into AI? Read our blog on some of the top Artificial Intelligence books.